Chinese artificial intelligence (AI) company Megvii announced today that it has open-sourced MegEngine, the deep learning framework at the core of Brain++, its new-generation AI productivity platform.

MegEngine began as a training and inference engine for Megvii's internal use. Three Megvii interns started it in 2013, and it was officially launched in 2014.

Over the past six years, this self-developed deep learning framework has supported Megvii's performance in international AI competitions and the rollout of the company's products and businesses. It currently serves more than 1,400 AI developers at the Megvii Research Institute.

At the press conference, Tang Wenbin, co-founder and CTO of Megvii, officially announced that MegEngine's code is now open source. He described it as an industrial-grade deep learning framework that unifies training and inference and combines dynamic and static graphs.

In traditional deep learning research and development, taking a product from prototype to production deployment often means designing for and calling separate training and inference frameworks.

Converting a model from one to the other can cause unexplained losses in performance or accuracy, which developers must correct by manual optimization, and problems that appear once the algorithm is deployed on the computing platform cannot be traced back to their source.

The MegEngine framework avoids such problems. Because training and inference are integrated, the model conversion step is omitted: the trained model can be used for inference directly, and model accuracy stays aligned across devices.

At the same time, MegEngine has built-in automatic model optimization and simplified workflows, which reduce the number of manual steps and the likelihood of errors.

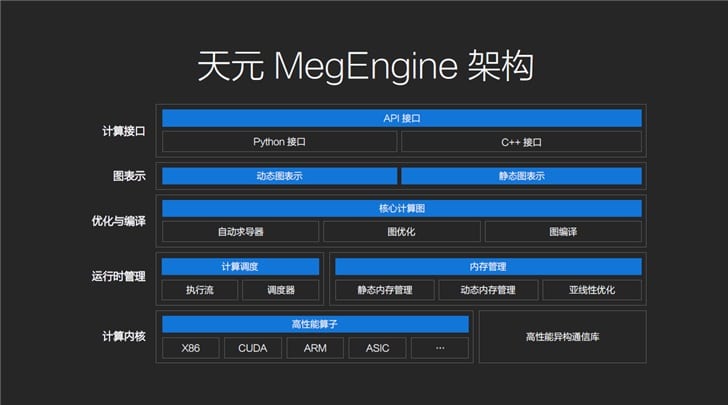

In addition, MegEngine provides Python and C++ interfaces, supports one-click conversion between dynamic and static graphs as well as mixing the two in one program, and lets developers use a high-level programming language for graph optimization and graph compilation.
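To give a feel for what converting a dynamic graph into a static one involves, here is a minimal pure-Python sketch. It is not MegEngine's API: the names `Sym` and `to_static` are hypothetical, and the approach (run the function once on placeholder values, record each operation, then replay the recorded graph) is only one common way such a conversion can work.

```python
import operator


class Sym:
    """Hypothetical placeholder that records operations instead of computing them."""

    def __init__(self, nodes, idx):
        self.nodes = nodes  # shared list of recorded graph nodes
        self.idx = idx      # position of this value in the graph

    def _emit(self, fn, other):
        # Record whether the right operand is another graph value or a constant.
        rhs = ("ref", other.idx) if isinstance(other, Sym) else ("const", other)
        self.nodes.append((fn, self.idx, rhs))
        return Sym(self.nodes, len(self.nodes) - 1)

    def __add__(self, other):
        return self._emit(operator.add, other)

    def __mul__(self, other):
        return self._emit(operator.mul, other)


def to_static(fn, n_inputs):
    """Trace fn once on placeholders and return a function that replays the graph."""
    nodes = [None] * n_inputs  # slots 0..n_inputs-1 are reserved for the inputs
    out = fn(*(Sym(nodes, i) for i in range(n_inputs)))

    def run(*args):
        values = list(args)
        for op, lhs, (kind, rhs) in nodes[n_inputs:]:
            right = values[rhs] if kind == "ref" else rhs
            values.append(op(values[lhs], right))
        return values[out.idx]

    return run


def model(x, w):
    # Ordinary Python code: runs eagerly ("dynamic") on plain numbers.
    return x * w + 1


static_model = to_static(model, 2)  # the same code, now a recorded graph
print(model(3, 4), static_model(3, 4))
```

The same `model` function serves both modes, which is the property the article describes; a real framework would additionally optimize and compile the recorded graph before running it.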

For runtime management, MegEngine has an execution flow and a scheduler. Dynamic and static memory allocation coexist, and an automatic sub-linear memory optimizer can further reduce memory use.
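The article does not say how the sub-linear memory optimizer works. Assuming it follows the widely used checkpoint-and-recompute idea (keep only some activations and recompute the rest during the backward pass), the arithmetic can be sketched as follows. The cost model and the function names here are simplifications chosen for illustration, not MegEngine internals.

```python
import math


def peak_activations(n_layers, segment):
    """Simplified count of activations held at once with checkpointing.

    Assumption: one checkpoint is kept per segment, plus the activations of
    the single segment currently being recomputed.
    """
    checkpoints = math.ceil(n_layers / segment)
    return checkpoints + segment


def best_segment(n_layers):
    """Segment length that minimizes the simplified peak activation count."""
    return min(range(1, n_layers + 1),
               key=lambda k: peak_activations(n_layers, k))


n = 100
k = best_segment(n)
print(k, peak_activations(n, k))
```

Under this model, a 100-layer network that would otherwise keep all 100 activations needs about 20 at its peak with segments of 10 layers, which grows roughly with the square root of the depth rather than linearly. The price is extra computation for the recomputed segments.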

At the lowest level, MegEngine's computing kernel is compatible with mainstream computing devices and supports multi-machine, multi-card distributed training.

To make models easier to reproduce, MegEngine supports importing PyTorch Modules, which can then be optimized for computer vision tasks.

At present, Megvii has released the source code of the MegEngine Alpha version on GitHub and on OpenI Qizhi, China's new-generation open source platform for artificial intelligence.

Developers can also use the online deep learning tools on MegEngine's official website to freely access computing power, obtain the latest datasets and training scripts, and run simple training jobs and trials.

Megvii has also prepared ModelHub, a collection of pre-trained models that MegEngine developers can use out of the box.

As for the roadmap after open-sourcing, Megvii said it plans to launch a beta version in June with the help of technical contributors.