A video restored by AI gives us a taste of old Beijing from 100 years ago.

The restored video vividly shows the hustle and bustle of city life, and even people greeting one another are clearly visible.

Can you imagine that these color images, which vividly show the lives of Chinese people a hundred years ago, were restored using artificial intelligence? Recently, this video of old Beijing footage restored with AI went viral in China.

On Bilibili alone, this restoration of old footage by the blogger "Ohtani's Game Creation Hut" has received 840,000 views and 69,000 likes.

The original video can be watched on Bilibili.

Working from footage shot in the early years of the Republic of China, the blogger used AI to colorize the film, raise its frame rate, and increase its resolution. The result breathes new life into this 100-year-old film and gives us a more detailed look at how people lived a century ago.

Comparing the restored version with the original footage makes the improvement easier to see.

Both color and clarity are greatly improved: before restoration the footage is covered in a grayish haze, and afterwards the colors are vibrant.

So how was such a striking result achieved technically? The blogger says he drew on a video restoration tutorial by YouTube blogger Denis Shiryaev.

Three Steps to Video Restoration

Earlier this year, Denis's restoration of a classic 1896 film also went viral abroad.

One of the most famous short films in cinema history is the 1896 silent film "L'Arrivée d'un train en gare de La Ciotat". It is very simple: only about 50 seconds long, it shows a train pulling into a station.

Denis restored this classic short film with AI, and the results are impressive.

According to Denis's web page, the whole restoration process centers on three core points: 4K resolution, a 60 fps frame rate, and added color, with sound effects added as well.

DAIN Frame Interpolation

To raise the frame rate, Denis said he mainly used DAIN (Depth-Aware Video Frame Interpolation), a frame interpolation technique proposed by Wenbo Bao et al. of Shanghai Jiao Tong University.

The method explicitly detects occlusion by exploiting depth information during frame interpolation.

The researchers developed a depth-aware optical flow projection layer to synthesize intermediate flows (which preferentially sample closer objects over farther ones), and they learn hierarchical features to gather contextual information.

The model then warps the input frames, depth maps, and contextual features based on the optical flow and local interpolation kernels, and finally synthesizes the output frame.

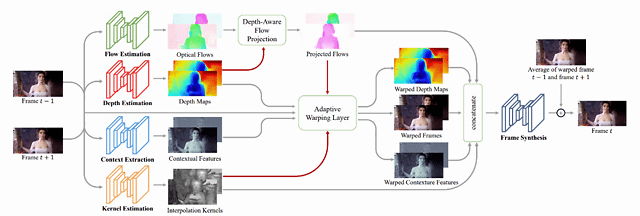

The DAIN model is structured as follows.

DAIN architecture diagram. Given two input frames, DAIN first estimates their optical flow and depth maps, then uses the depth-aware flow projection layer to generate intermediate flows.

The input frames, depth maps, and contextual features are then warped by an adaptive warping layer based on the optical flow and spatially varying interpolation kernels; finally, the output frame is generated by a frame synthesis network.
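The depth-aware flow projection step can be sketched in NumPy. In this minimal sketch, which follows our reading of the DAIN paper, each pixel of frame 0 is moved to time t along its flow; where several pixels land on the same position, their flows are averaged with weights proportional to inverse depth, so the nearer object wins. The function name, the (dy, dx) array layout, and the round-half-up rule are assumptions, and the real layer also fills holes, which this sketch leaves as NaN.

```python
import numpy as np

def depth_aware_flow_projection(flow01, depth0, t):
    """Project the flow F_{0->1} to the intermediate flow F_{t->0}.

    flow01: (H, W, 2) array of per-pixel (dy, dx) motion from frame 0 to frame 1.
    depth0: (H, W) array of positive depths for frame 0.
    t:      time of the frame to synthesize, 0 < t < 1.
    Returns an (H, W, 2) array; positions no pixel projects to are NaN (holes).
    """
    H, W = depth0.shape
    num = np.zeros((H, W, 2))
    den = np.zeros((H, W))
    weight = 1.0 / depth0  # inverse depth: nearer pixels count more
    for y in range(H):
        for x in range(W):
            # Where does this pixel sit at time t? (round half up)
            ty = int(np.floor(y + t * flow01[y, x, 0] + 0.5))
            tx = int(np.floor(x + t * flow01[y, x, 1] + 0.5))
            if 0 <= ty < H and 0 <= tx < W:
                num[ty, tx] += weight[y, x] * flow01[y, x]
                den[ty, tx] += weight[y, x]
    out = np.full((H, W, 2), np.nan)
    hit = den > 0
    # F_{t->0} points backwards in time, hence the factor -t.
    out[hit] = -t * num[hit] / den[hit][:, None]
    return out
```

For example, if a near pixel (depth 1) moving 2 px to the right and a far pixel (depth 3) that stays still both land on the same position at t = 0.5, a plain average would give a flow of -0.5 px there, while the inverse-depth weighting gives -0.75 px, closer to the near object's motion.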

4K resolution

Since the first HDTVs hit the market in 1998, higher definition has been one of the main directions in which display technology has developed.

To put some numbers on it: an old standard-definition TV has a resolution of only 720x480, so it can display 345,600 pixels at a time.

HD TVs have a resolution of 1920x1080, or 2,073,600 pixels in total, six times that of SD, while 4K's 3840x2160 resolution requires 8,294,400 pixels, four times that of HD.
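The pixel arithmetic above is easy to check. A small sketch; the dictionary and function names are ours:

```python
# Width x height for the display standards quoted in the text.
RESOLUTIONS = {"SD": (720, 480), "HD": (1920, 1080), "4K": (3840, 2160)}

def pixel_count(name):
    """Total number of pixels shown at once for a given standard."""
    width, height = RESOLUTIONS[name]
    return width * height

if __name__ == "__main__":
    for name in RESOLUTIONS:
        print(f"{name}: {pixel_count(name):,} pixels")
    print("HD / SD:", pixel_count("HD") / pixel_count("SD"))
    print("4K / HD:", pixel_count("4K") / pixel_count("HD"))
    print("extra pixels from HD to 4K:", f"{pixel_count('4K') - pixel_count('HD'):,}")
```

HD comes out at exactly 6 times SD and 4K at exactly 4 times HD, and moving from HD to 4K adds 6,220,800 pixels per frame.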

Put simply, upscaling to 4K means generating at least 6 million additional pixels per frame: even starting from full HD, 8,294,400 - 2,073,600 = 6,220,800 pixels have to be filled in. This "interpolation" step is where AI comes in, supplying content for the new pixels based on what the surrounding pixels show.

"Interpolation" is essentially a guessing game, and the guesses are better when AI techniques such as convolutional neural networks make them.
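For contrast with the AI approach, here is classical bilinear interpolation, which fills each new pixel with a distance-weighted average of its nearest original neighbors. This is a minimal NumPy sketch for a single-channel frame, assuming pixel-center alignment and an integer scale factor; the function name is ours and it is not what Gigapixel AI does internally.

```python
import numpy as np

def bilinear_upscale(img, scale):
    """Upscale a 2-D (single-channel) image by an integer factor with bilinear interpolation."""
    h, w = img.shape
    # Map the center of every output pixel back into input-pixel coordinates.
    ys = np.clip((np.arange(h * scale) + 0.5) / scale - 0.5, 0, h - 1)
    xs = np.clip((np.arange(w * scale) + 0.5) / scale - 0.5, 0, w - 1)
    y0 = np.floor(ys).astype(int)
    x0 = np.floor(xs).astype(int)
    y1 = np.minimum(y0 + 1, h - 1)
    x1 = np.minimum(x0 + 1, w - 1)
    wy = (ys - y0)[:, None]  # vertical weight of the lower neighbor
    wx = (xs - x0)[None, :]  # horizontal weight of the right neighbor
    # Blend horizontally along the two neighboring rows, then vertically.
    top = img[y0][:, x0] * (1 - wx) + img[y0][:, x1] * wx
    bottom = img[y1][:, x0] * (1 - wx) + img[y1][:, x1] * wx
    return top * (1 - wy) + bottom * wy
```

Upscaling the 1x2 frame `[[0.0, 1.0]]` by 2 yields rows of `[0, 0.25, 0.75, 1]`: every new value is a blend of its neighbors, so no new detail is created. That is the limitation learned models try to overcome.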

In this demonstration, Denis boosted the resolution to 4K with Gigapixel AI, software developed by Topaz Labs that is now a mature commercial product.

The technology was originally developed to help photographers enlarge their photos up to 6x without losing detail, and in the course of turning it into a product it proved entirely feasible to apply it to video as well.

It is worth noting, though, that rendering a few seconds of video can take hours of processing time. Interested readers can try it for themselves.

DeOldify coloring model

For colorization, most readers in the community are probably familiar with DeOldify, a GAN-based image colorization model. The following comparison shows what this model can do.

DeOldify is based on a generative adversarial network (GAN) and is developed and maintained by deep learning researcher Jason Antic. It has gone through several iterations since the project was open-sourced in 2018.
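To make "adversarial" concrete, here are the textbook GAN losses: a discriminator learns to tell real color photos from colorized ones, while the generator is rewarded when its colorizations are judged real. This is a generic sketch only; DeOldify's own training procedure differs in its details, and the function names and the assumption that `d_real`/`d_fake` are discriminator probabilities in (0, 1) are ours.

```python
import numpy as np

def discriminator_loss(d_real, d_fake, eps=1e-12):
    """Binary cross-entropy: D should output 1 for real color images and 0 for generated ones."""
    d_real = np.asarray(d_real, dtype=float)
    d_fake = np.asarray(d_fake, dtype=float)
    return -np.mean(np.log(d_real + eps) + np.log(1.0 - d_fake + eps))

def generator_loss(d_fake, eps=1e-12):
    """Non-saturating generator loss: low when D scores the colorized images as real."""
    d_fake = np.asarray(d_fake, dtype=float)
    return -np.mean(np.log(d_fake + eps))
```

Training alternates between the two: lowering the discriminator loss sharpens the critic, and lowering the generator loss pushes the colorizations toward what real color photos look like.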

AI technology in digital restoration has more applications than one might think

Resolution enhancement, frame-rate enhancement, and colorization, described above, are three sub-areas within the broader field of digital restoration, and AI shows up throughout image restoration technology as a whole.

For image restoration, the general steps are: read in the image, analyze its content, obtain all of its pixels, and identify the damaged region.

Compute a priority for the pixels in the damaged region and select the highest-priority patch to repair first.

Compare candidate patches from the undamaged source region with the patch to be repaired, and take the one with the smallest error as the best match.

Fill in the patch, then check whether the damaged region has been fully repaired; if it has, output the image, otherwise repeat from the priority step.
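These steps resemble exemplar-based inpainting and can be sketched in NumPy. It is a simplified, hypothetical implementation: priority is just the share of already-known pixels around each damaged pixel (no image-structure term), matching uses the sum of squared differences over known pixels, and it works on a single-channel image.

```python
import numpy as np

def inpaint(image, mask, patch=3):
    """Greedy exemplar-based inpainting (simplified sketch).

    image: 2-D grayscale array; mask: bool array of the same shape, True = damaged.
    patch: odd patch size; the image must be at least patch x patch.
    """
    img = image.astype(float).copy()
    hole = mask.copy()
    h, w = img.shape
    r = patch // 2

    def window(cy, cx):
        return np.s_[cy - r:cy + r + 1, cx - r:cx + r + 1]

    # Source region: every full patch that contains no damaged pixel.
    sources = [window(y, x) for y in range(r, h - r) for x in range(r, w - r)
               if not hole[window(y, x)].any()]
    if not sources:
        raise ValueError("no undamaged patch to copy from")

    while hole.any():
        # Priority: fraction of known pixels in the patch around each damaged pixel.
        best, best_p = None, -1.0
        for y, x in zip(*np.nonzero(hole)):
            # Clamp the center so the patch stays inside the image but still covers (y, x).
            cy = min(max(y, r), h - r - 1)
            cx = min(max(x, r), w - r - 1)
            p = 1.0 - hole[window(cy, cx)].mean()
            if p > best_p:
                best, best_p = window(cy, cx), p

        # Best match: source patch with the smallest squared error on the known pixels.
        target, known = img[best], ~hole[best]
        errors = [((img[s] - target)[known] ** 2).sum() for s in sources]
        src = img[sources[int(np.argmin(errors))]]

        # Fill the damaged pixels of the target patch (a view into img) and mark them repaired.
        missing = hole[best]
        target[missing] = src[missing]
        hole[best] = False
    return img
```

On an image of horizontal stripes with a 2x2 hole, the best-matching patch has the same stripe phase, so the stripes are restored exactly. Real images need the structure term and more careful matching to avoid visible seams.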

Video restoration evolved from image restoration and also works frame by frame, so its process is similar.

As for boosting resolution, Denis handled it with the Gigapixel AI software.

In practice, image resolution enhancement and image super-resolution involve many technical details, such as image alignment, image segmentation, image compression, image feature extraction, and image quality assessment.

Research on these sub-topics appears frequently at major AI conferences.

Learning-based methods follow a similar idea: a machine learning model learns from a set of training samples how to recover high-frequency information, then uses it to make reasonable predictions about the missing content when raising the resolution of video frames. Methods built on this idea keep emerging.
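A toy version of this idea fits in a few lines of NumPy: learn, by least squares over training pairs, a linear filter bank that maps each 3x3 neighborhood of a low-resolution frame to the 2x2 block of high-resolution pixels beneath it. Training pairs are made by downsampling high-resolution images. Everything here (the 2x factor, average-pool downsampling, the 3x3 neighborhood, the function names) is an illustrative assumption rather than any specific published method; real super-resolution networks such as CNNs learn far richer, nonlinear mappings.

```python
import numpy as np

SCALE = 2  # upscaling factor assumed for this sketch

def downsample(hr):
    """Simulate a low-res frame by averaging each SCALE x SCALE block."""
    h, w = hr.shape
    return hr.reshape(h // SCALE, SCALE, w // SCALE, SCALE).mean(axis=(1, 3))

def lr_features(lr):
    """3x3 neighborhood of every interior low-res pixel, flattened to 9 features."""
    h, w = lr.shape
    return np.array([lr[i - 1:i + 2, j - 1:j + 2].ravel()
                     for i in range(1, h - 1) for j in range(1, w - 1)])

def hr_targets(hr):
    """The SCALE x SCALE high-res block under each interior low-res pixel."""
    h, w = hr.shape[0] // SCALE, hr.shape[1] // SCALE
    return np.array([hr[i * SCALE:(i + 1) * SCALE, j * SCALE:(j + 1) * SCALE].ravel()
                     for i in range(1, h - 1) for j in range(1, w - 1)])

def train(hr_images):
    """Fit a 9 x SCALE**2 linear filter bank by least squares on (low-res, high-res) pairs."""
    X = np.vstack([lr_features(downsample(im)) for im in hr_images])
    Y = np.vstack([hr_targets(im) for im in hr_images])
    W, *_ = np.linalg.lstsq(X, Y, rcond=None)
    return W

def upscale(lr, W):
    """Predict the interior of the high-res frame; border pixels are left at 0."""
    h, w = lr.shape
    out = np.zeros((h * SCALE, w * SCALE))
    pred = lr_features(lr) @ W
    k = 0
    for i in range(1, h - 1):
        for j in range(1, w - 1):
            out[i * SCALE:(i + 1) * SCALE, j * SCALE:(j + 1) * SCALE] = pred[k].reshape(SCALE, SCALE)
            k += 1
    return out
```

Trained only on a few linear brightness ramps, the learned filters reconstruct the interior of an unseen ramp exactly from its downsampled version; on real footage such a simple linear model would recover only a small part of the lost detail.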

On the application side, the spread of HD devices has created strong demand for remastering early games and films, and progress in image restoration, image super-resolution, and related restoration technologies offers a sustainable way to meet that demand.