Apple today released ARKit 3.5, a new version of its AR framework that lets developers take advantage of the LiDAR scanner in the new iPad Pro.

The latest ARKit version adds Scene Geometry and Instant AR, and improves the existing Motion Capture and People Occlusion features.

Apple announced the update on its developer website today:

ARKit 3.5 leverages the new LiDAR scanner and depth-sensing system on iPad Pro to support a new generation of AR applications that use Scene Geometry to enhance scene understanding and object occlusion.

With instant AR placement and improved Motion Capture and People Occlusion, AR experiences on iPad Pro are now even better, without the need to write any new code.

Scene Geometry

With Scene Geometry, you can create a topological map of your space with labels that identify floors, walls, ceilings, windows, doors, and seats. This deeper understanding of the real world unlocks object occlusion and real-world physics for virtual objects, and gives you more information to power your AR workflows.
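As a rough illustration of how an app might opt in to Scene Geometry, the sketch below enables mesh reconstruction with classification on a world-tracking configuration when the device supports it. The function names `makeSceneGeometryConfiguration` and `label(for:)` are hypothetical helpers invented for this example; the ARKit types and properties are the ones exposed by the framework, but treat this as a minimal sketch rather than Apple's recommended setup.

```swift
import ARKit

// Hypothetical helper: builds a world-tracking configuration that requests
// a classified scene mesh when the hardware (LiDAR scanner) supports it.
func makeSceneGeometryConfiguration() -> ARWorldTrackingConfiguration {
    let configuration = ARWorldTrackingConfiguration()
    if ARWorldTrackingConfiguration.supportsSceneReconstruction(.meshWithClassification) {
        configuration.sceneReconstruction = .meshWithClassification
    }
    return configuration
}

// Hypothetical helper: maps a mesh classification to a readable label.
func label(for classification: ARMeshClassification) -> String {
    switch classification {
    case .floor: return "floor"
    case .wall: return "wall"
    case .ceiling: return "ceiling"
    case .table: return "table"
    case .seat: return "seat"
    case .window: return "window"
    case .door: return "door"
    case .none: return "unclassified"
    @unknown default: return "unknown"
    }
}
```

On devices without a LiDAR scanner the support check fails and the configuration falls back to ordinary world tracking, so the same code path can run everywhere.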

Instant AR

The LiDAR scanner on iPad Pro enables incredibly fast plane detection, allowing you to place AR objects instantly in the real world without scanning. iPad Pro automatically enables instant AR placement for all apps built with ARKit without any code changes.

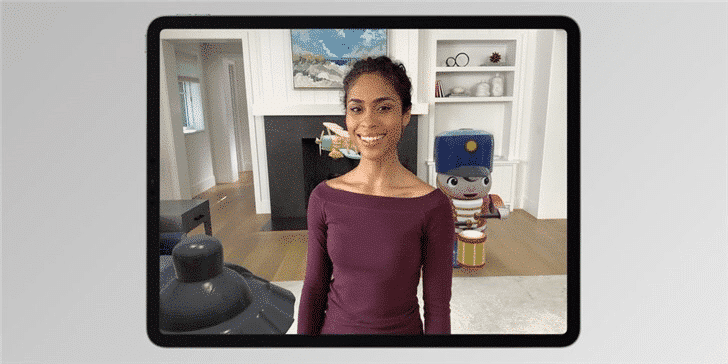

Improved Motion Capture and People Occlusion

With ARKit 3.5 on iPad Pro, depth estimation in People Occlusion and height estimation in Motion Capture are more accurate. Both features improve in all apps built with ARKit on iPad Pro, again without any code changes.
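The accuracy improvements apply automatically, but an app still has to have People Occlusion turned on to benefit from them. A minimal sketch of how that is typically requested is shown below; `makePeopleOcclusionConfiguration` is a hypothetical helper name used only for illustration.

```swift
import ARKit

// Hypothetical helper: requests person segmentation with depth so that
// people in the camera feed can occlude virtual content.
func makePeopleOcclusionConfiguration() -> ARWorldTrackingConfiguration {
    let configuration = ARWorldTrackingConfiguration()
    if ARWorldTrackingConfiguration.supportsFrameSemantics(.personSegmentationWithDepth) {
        configuration.frameSemantics.insert(.personSegmentationWithDepth)
    }
    return configuration
}
```

An app that already runs a configuration like this would need no changes to pick up the better depth estimation on the new iPad Pro.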