- Agibot has open-sourced its embodied AI dataset, Agibot World 2026, aiming to break industry bottlenecks with high-quality, real-world data.

- The move by Agibot seeks to address the data drought that is hindering the industry's development.

Agibot has open-sourced its embodied AI dataset, Agibot World 2026, to accelerate the training of robots in the real physical world.

The dataset is the first open-source collection covering the entire spectrum of embodied AI research, designed to let robots step out of the lab to breathe, learn, and evolve in the real world, according to an Agibot statement on Tuesday.

The humanoid robot industry currently faces a "data drought," as existing data volumes are too small to meet the interaction demands of the physical world.

SenseTime co-founder Wang Xiaogang predicted last month that the robotics sector still needs about two years of technological breakthroughs and data accumulation to reach its "ChatGPT moment."

The current bottleneck in the humanoid robotics industry is that data volume remains at the 100,000-hour level, which is insufficient for complex physical-world interactions, he said at the time.

Agibot released the original Agibot World at the end of 2024, billing it as the embodied AI industry's first million-level dataset.

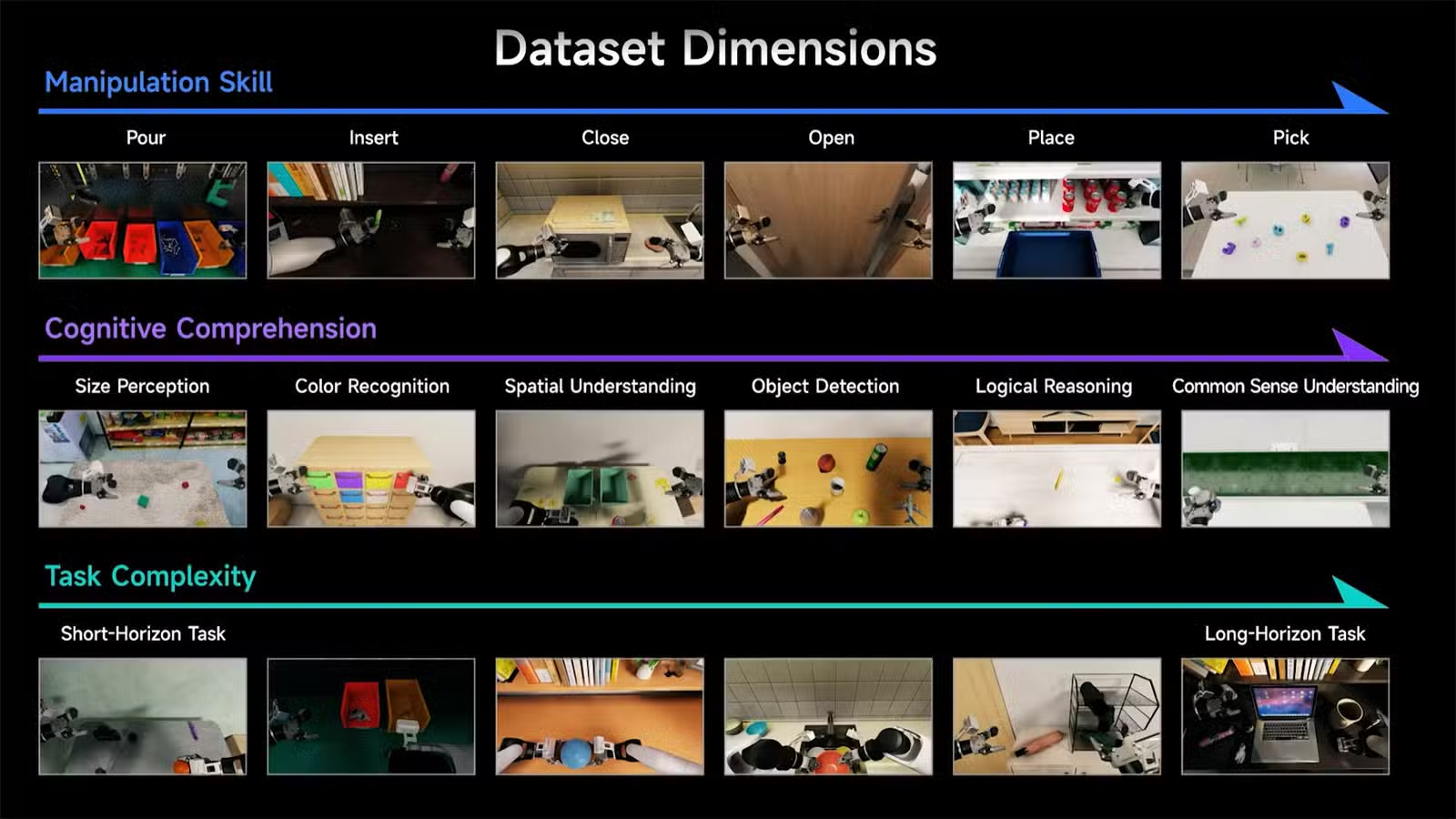

As research deepens, new demands have become clearer: researchers and developers need not just data volume but high-quality data that truly captures the complexity of the physical world, the company said.

To meet the industry's data needs, Agibot World 2026 draws on data collected in real-world scenarios including commercial spaces, homes, and industrial environments.

The data for Agibot World 2026 was collected with the Agibot G2 general-purpose robot, which gathered unified multimodal data including vision, touch, and force feedback, according to the announcement.

The dataset offers a massive volume of data and, by introducing whole-body control and beyond-visual-line-of-sight teleoperation, emphasizes robots' real physical interactions.

This collection paradigm, combined with a refined multi-level annotation system, makes the data naturally generalizable and transferable to practical applications, the statement said.

Agibot World 2026 will be released as open source worldwide in five phases, with the first phase focusing on imitation learning.

With companies racing to build up their data reserves, Agibot's open-source initiative could accelerate the commercialization of embodied AI technologies.