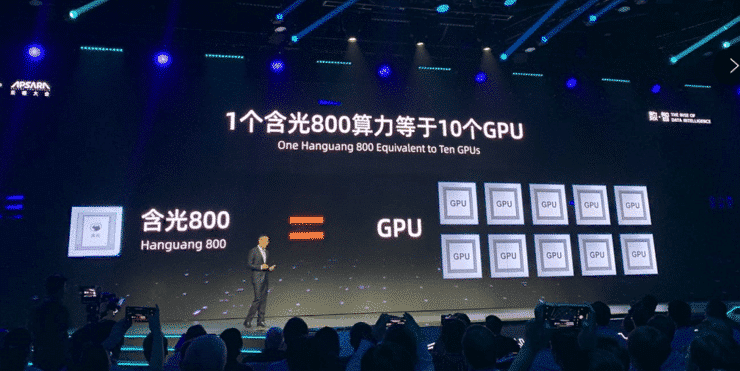

A year ago, Alibaba unveiled its first AI chip, the Hanguang 800, billed at the time as the most powerful AI inference chip. The company recently shared an update on the chip's progress.

Long Xin, director of heterogeneous computing product development at Aliyun (Alibaba Cloud), said that Hanguang 800 NPU instances are now officially available to external customers and can be purchased on Aliyun without being whitelisted.

The Hanguang 800 chip itself is not sold to the public; its computing power is offered only through Aliyun.

The instances support up to 8 NPU cores and 96 vCPUs, 384 GB of memory, and network bandwidth of up to 30 Gbit/s. They mainly target inference acceleration for CNN-type models in data centers, serving workloads such as City Brain and image and video content review, Long Xin said.

Long Xin said the Hanguang 800's hardware has three main characteristics:

Deep optimization for CNNs and computer vision algorithms.

Accelerated convolution and matrix multiplication, with support for transposed convolution (deconvolution), dilated (atrous) convolution, 3D convolution, interpolation, and ROI operations.

Optimization for ResNet-50, SSD/DSSD, Faster-RCNN, Mask-RCNN, DeepLab, and other models.

High energy efficiency and low latency.

High-density compute and storage, greatly reducing I/O requirements.

Hardware-software co-design supports sparse compression of weights and quantized computation.

The instruction set supports programmable model extensions.

In addition to INT8/INT16 quantization acceleration, the chip supports FP16/BFP16 vector computation, which directly accelerates activation functions such as ReLU, Sigmoid, and Tanh and leaves room to support new activation functions in the future.
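For readers unfamiliar with the term, the idea behind INT8 quantization can be illustrated with a minimal sketch. This is a generic symmetric per-tensor scheme, not Alibaba's implementation; the function names `quantize_int8` and `dequantize` and the sample weights are hypothetical and chosen only for illustration.

```python
def quantize_int8(values):
    """Generic symmetric per-tensor INT8 quantization (illustrative only).

    Maps floats to integer codes in [-127, 127] using a single scale factor.
    This is an assumed textbook scheme, not the Hanguang 800's actual method.
    """
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float values from the integer codes."""
    return [c * scale for c in codes]


# Hypothetical sample weights, made up for this example.
weights = [0.52, -1.27, 0.003, 0.9]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)

for w, c, r in zip(weights, codes, restored):
    print(f"float {w:+.3f} -> int8 {c:+4d} -> restored {r:+.3f}")
```

Each restored value differs from the original by at most half the scale factor, which is the precision traded away in exchange for cheaper integer arithmetic.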

Long Xin emphasized that the Hanguang 800 mainly targets data centers and large edge deployments, focusing on inference acceleration for CNN-type models, and can be extended to other DNN models. In specific applications, it currently delivers 4 to 11 times the performance of a GPU.

Long Xin said that in a pedestrian detection application, a 4-core Hanguang 800 can support 100 video channels, 4 times the 25 channels a mainstream GPU can handle for inference.

In vehicle detection, a 4-core Hanguang 800 can support 85 video channels, 8.5 times the 10 channels supported by a mainstream GPU.

With the ResNet50 V2 model, used for content recognition in applications such as live streaming, short videos, and product information feeds, a 4-core Hanguang 800 can reach 20,000 FPS, about 11 times the 1,800 FPS of mainstream inference GPUs.

With the Inception V4 model, a 4-core Hanguang 800 can process up to 5,000 FPS, about 10.8 times the 460 FPS of a mainstream inference GPU.
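The speedup figures quoted above follow directly from the reported throughput numbers. The short sketch below recomputes them; the dictionary layout and the `speedup` helper are illustrative choices, while the numbers themselves are those given in the article.

```python
# (Hanguang 800 4-core throughput, mainstream GPU throughput) as reported above.
benchmarks = {
    "pedestrian detection (video channels)": (100, 25),
    "vehicle detection (video channels)": (85, 10),
    "ResNet50 V2 (FPS)": (20000, 1800),
    "Inception V4 (FPS)": (5000, 460),
}


def speedup(npu_throughput, gpu_throughput):
    """Ratio of NPU throughput to GPU throughput."""
    return npu_throughput / gpu_throughput


ratios = {name: speedup(npu, gpu) for name, (npu, gpu) in benchmarks.items()}

for name, ratio in ratios.items():
    print(f"{name}: {ratio:.2f}x")
```

The ratios span roughly 4x to 11x, matching the range Long Xin cited. The Inception V4 ratio works out to about 10.87, which the article reports as 10.8.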